Digital compliance is now a human safety issue: it belongs in the stack, not an audit report.

FairPatterns is a multimodal AI that detects and fixes digital harms across websites, apps and social media, including dark patterns, addictive design and predatory design.

Detect predatory design

Our multimodal AI scans websites, apps and social media across the full spectrum of digital harm, from dark patterns and addictive design to consent manipulation and exploitative practices. It maps each harmful design to its corresponding legal violations.

Fix it directly in your stack

FairPatterns is a living library that embeds human safety, legal and fairness requirements directly into the design and tech stack, turning compliance into code, and fair design into the path of least resistance.

Monitor digital risks across jurisdictions

FairPatterns sits inside Figma and your AI development workflow as a mandatory evaluation layer, ensuring no interface reaches users without being assessed for manipulative or addictive design.

The authenticated evidence layer for enforcement against manipulative and addictive design, at scale.

From scan to courtroom-ready evidence: FairPatterns' multimodal AI detects manipulative and addictive design across websites, apps, and social media, generating authenticated intelligence for litigation and regulatory enforcement.

Enshrine the rule of law in the digital world, for real

Identify addictive design, dark patterns and deceptive practices across digital platforms with our multimodal AI.

Harmful design intelligence for strategic enforcement

FairPatterns' AI analyzes interfaces, policies, and user flows, generating authenticated, litigation-grade evidence ready for investigations and enforcement actions.

Always-on compliance monitoring for the digital regulation era.

Enforcement has always been reactive. FairPatterns changes that: continuously monitoring digital platforms to detect violations and protect consumers before harm compounds.

FairPatterns arms compliance teams and litigators with packaged evidence and intelligence at scale.

Our multimodal AI analyzes digital interfaces, policies and user flows to detect addictive design and manipulation, and to generate authenticated evidence for compliance and litigation cases.

Digital platforms move faster than compliance audits. FairPatterns closes that gap with AI.

Legal risk assessment across digital platforms is slow, incomplete, and expensive. FairPatterns changes that: scanning for addictive design, dark patterns, privacy violations, and deceptive practices, and mapping every finding directly to applicable regulations.

AI-powered insights that sharpen strategy, strengthen arguments, and surface evidence.

FairPatterns gives law firms science-backed, authenticated, AI-powered insights into addictive design, dark patterns, and user behavior: the evidentiary edge that wins digital manipulation cases.

Harmful design User Testing Lab

FairPatterns' User Testing Lab generates scientific, court-ready evidence of online manipulation and addictive design, scored against 100 objective criteria through our proprietary Exploitation Design Index.

Solutions

Deceptive Design AI Agent

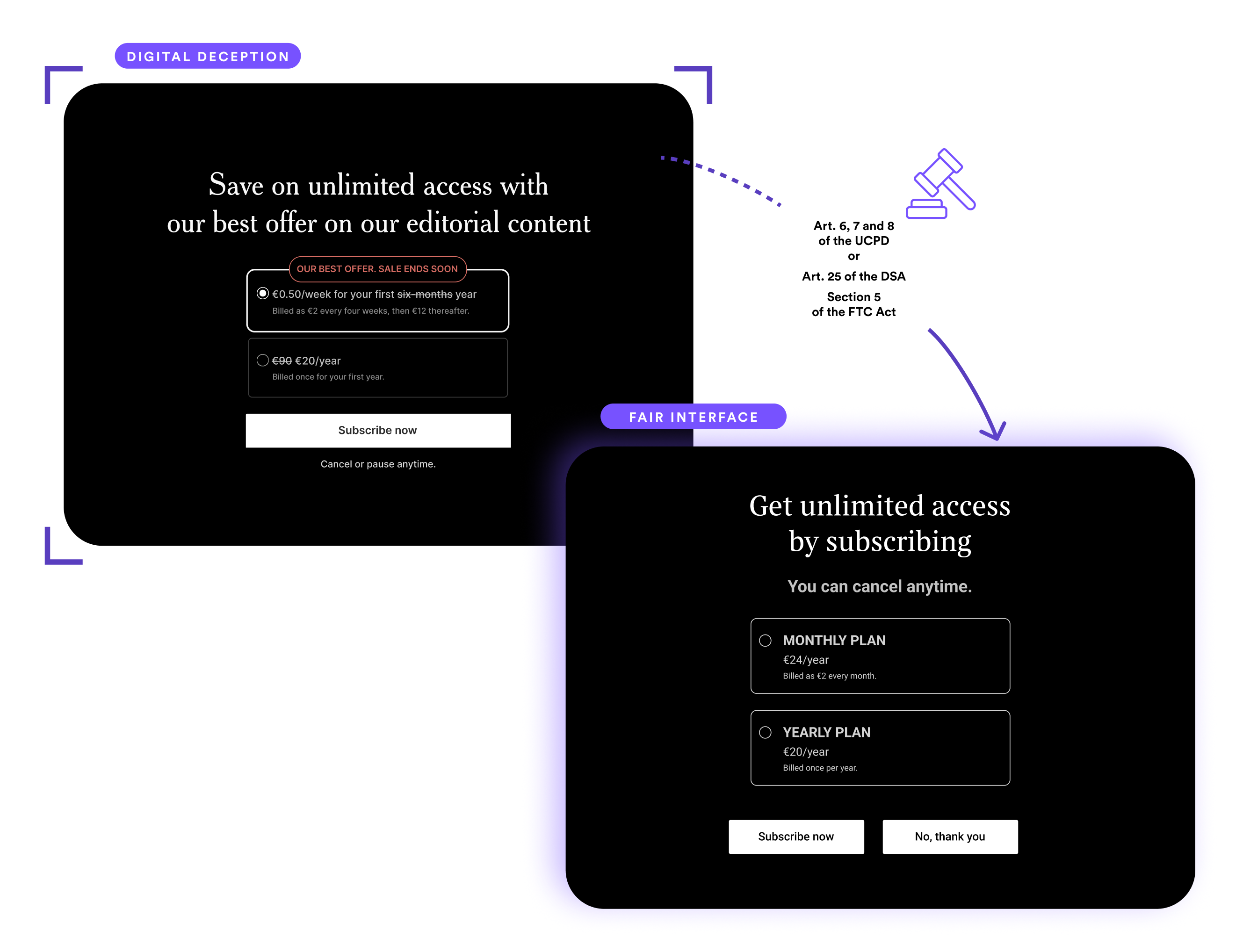

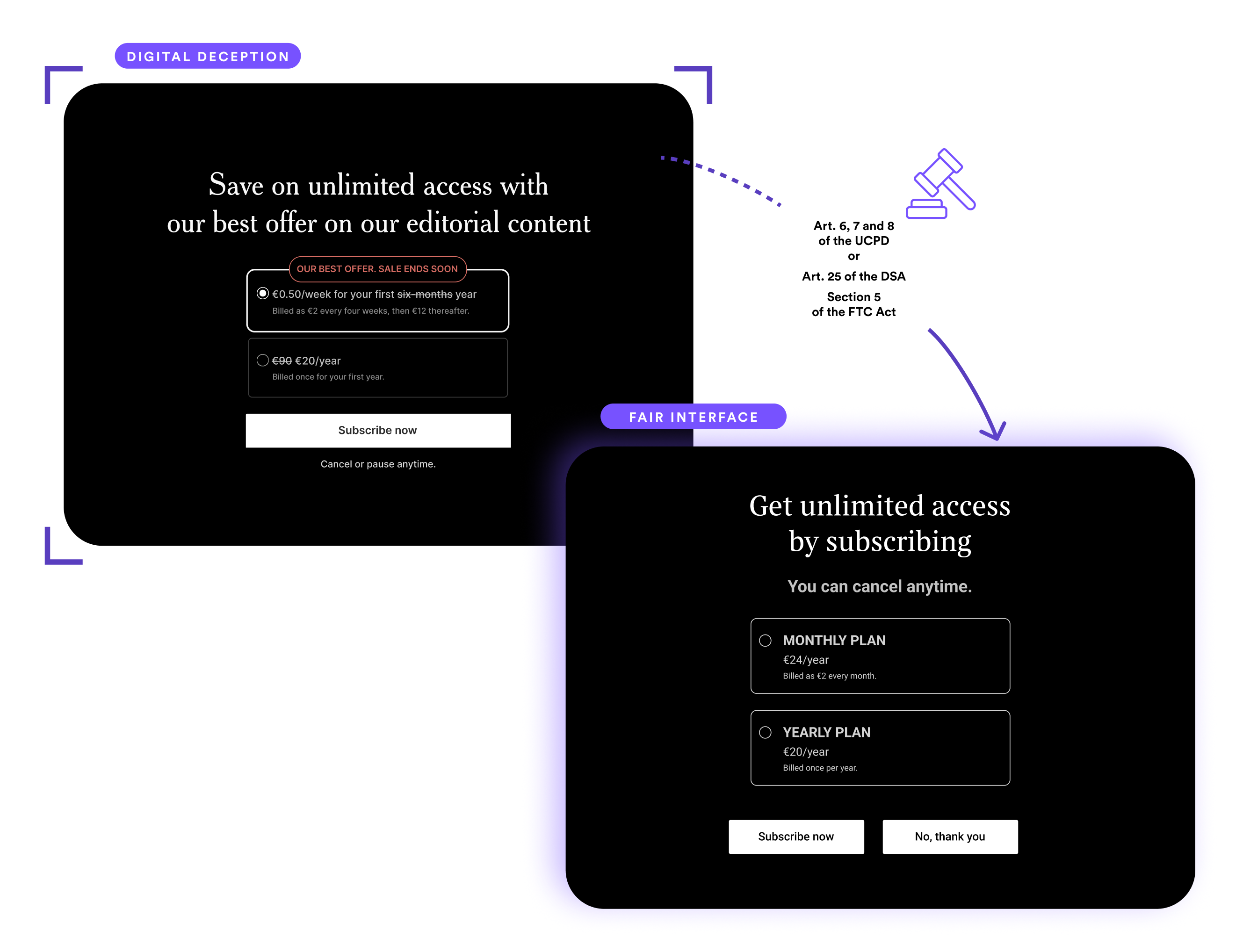

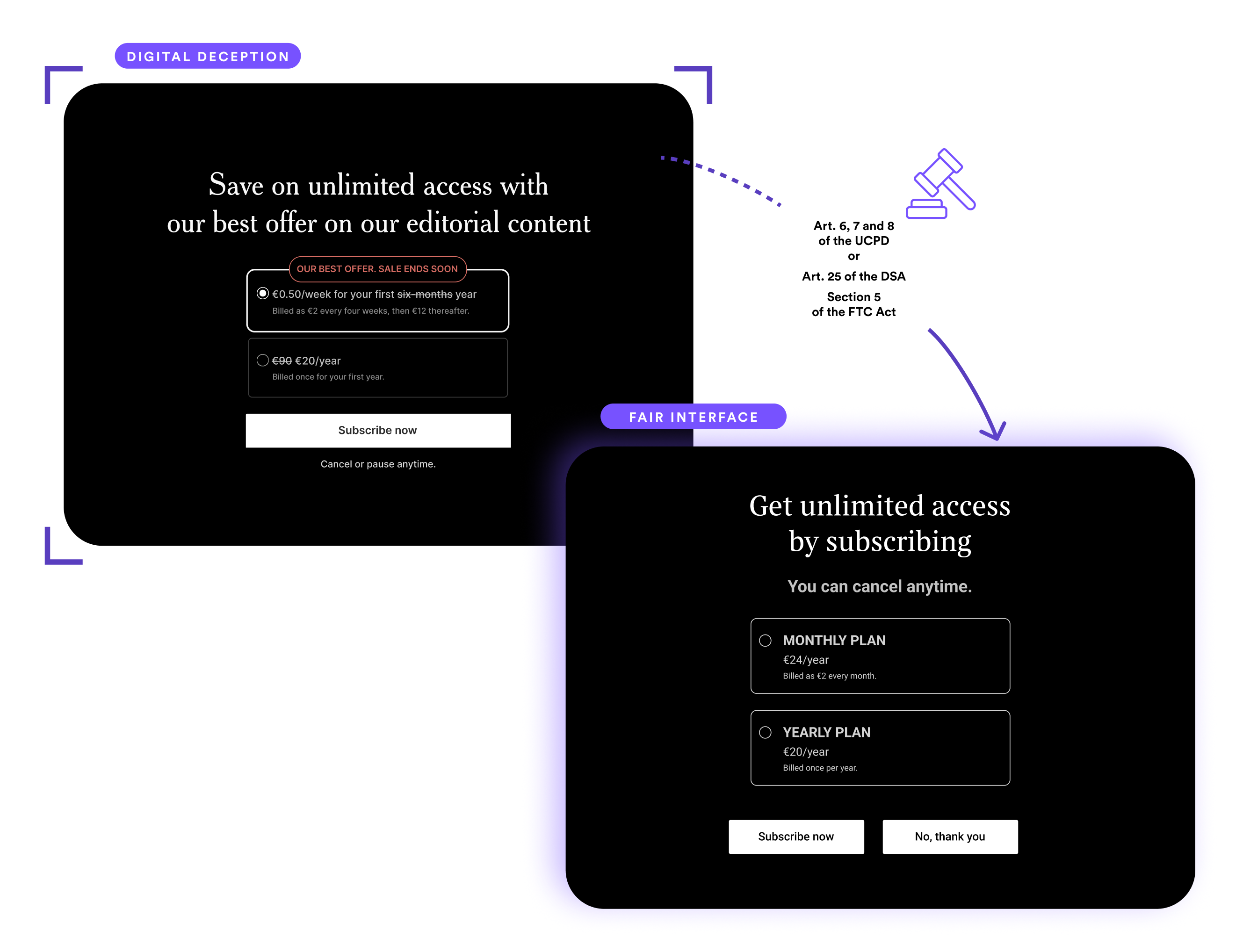

Ever wanted to stop dark patterns before they go live? We've got you! That's why we created our Deceptive Design AI Agent. It helps designers and developers avoid creating new dark patterns at the mockup or integration stage.

Dark Pattern Screening

Our AI solution finds dark patterns and other digital violations on sites and apps. It provides risk prioritization and easy-to-implement design solutions.

Figma Plug-in

Our Figma Plug-in enables your designers to scan their designs at the mockup stage and fix any potential digital violations before they go live.

Consent Scanner

Gain instant insights on cookie practices across digital platforms, helping you uphold privacy standards and ensure compliance across websites.

Online Manipulation Observatory

We systematically scan sectors and key market players to detect, classify, and quantify dark patterns and digital compliance risks.

Fair User Lab

A range of qualitative, quantitative and eye-tracking research services to determine whether products use problematic dark patterns.

Hundreds of multimodal AI agents, each specialized, all orchestrated in context, analyzing interfaces, text, and code with a depth no single model can achieve.

Legal requirements don't belong in a PDF. FairPatterns embeds them directly into your tech and design workflow as safe, actionable interface patterns.

Training

Unlock the power of fair design with our comprehensive training programmes.

Deceptive design and digital harms uncovered

Detect deceptive UX and turn risk into responsibility.

Design for trust and compliance

Design for trust, compliance, and long-term impact.

Free resources

Publications

Research and articles about dark patterns, user behaviour and law.

Regulations

Laws and regulations from around the world that relate to dark patterns.

Cases

Legal cases and enforcement actions from around the world that relate to dark patterns.

Jobs

Employment opportunities in dark patterns and related areas.

Upgrade your compliance knowledge

Updated quarterly with curated new content.

Updated weekly with new research and cases.

Speaking & Media

Marie Potel-Saville, Co-Founder & CEO of FairPatterns, is available for keynote speeches and speaking engagements.

Expertise Areas

• Dark Patterns & Digital Manipulation

• AI Ethics & Trustworthy Design

• Digital Fairness Regulations (GDPR, DSA, Digital Fairness Act)

• Consumer Protection in Digital Ecosystems

• Ethical AI Implementation

Invite Marie to Speak

Bring ethical design to the stage with Marie’s keynote on dark patterns and user trust.

Fair Patterns Podcast and Blog

Exploring the intersection of ethics, design, and compliance in the digital world

How e-commerce sites manipulate you into buying

Stop big tech from making users behave in ways they don’t want to

Beyond Accuracy: Evaluating LLMs for Evidentiary Use in Legal Contexts

Awards & Associations

Meet Our Team

Our expert auditors, researchers, and legal professionals.